An AI search visibility audit is a process that evaluates how often your brand is mentioned, cited, and accurately represented across AI search engines like ChatGPT, Google AI Overviews, and Perplexity.

It measures citation frequency, retrieval accuracy, and whether your content is selected as the source in AI-generated answers.

Clients want to know why their brand does not appear in ChatGPT answers or in Google AI Overviews citations, and agencies need to answer with strategy and numbers. Why is this so urgent?

In July 2025, TechCrunch reported that Google AI Overviews reached about 2 billion monthly users, with AI Mode at around 100 million in the US, making AI discovery a measurable channel rather than a future bet.

This guide gives you a productizable AI visibility audit you can sell as a repeatable service deliverable. You will get a six-category checklist, a 0 to 100 scoring rubric, and a 30/60/90-day execution roadmap. You will also get a prompt-based system that replaces keywords as the primary unit of measurement for AI search.

What Is an AI Search Visibility Audit (And Why It's Different from an SEO Audit)?

An AI search visibility audit, or Generative Engine Optimization (GEO) audit, evaluates how well your brand is understood, trusted, and cited by AI-powered answer engines such as ChatGPT, Perplexity, and Google AI Overviews.

An AI visibility audit measures:

- Your brand presence: Does your brand appear in AI-powered answer engine responses?

- Your branding accuracy: Do LLMs capture your brand information accurately?

- Brand sentiment: How does AI frame your brand? For example, is it described as a leader in your market niche?

- Competitive positioning: How is your brand positioned against competitors when AI engines answer specific queries in your niche?

To measure these parameters, your AI visibility audit also needs to evaluate:

- Technical AI readiness

- Semantic content structure

- Entity trust signals

How Does AI Search Visibility Differ from Traditional SEO?

Traditional SEO still plays a foundational role in AI visibility, especially for crawlability, content structure, and authority signals. Many businesses now combine SEO services with GEO/AEO strategies to improve both search rankings and AI citations.

This matters because AI answers compress the buyer journey, and a single cited source can win the click that organic listings used to capture.

Here are some key differences.

| Dimension | SEO Audit | AI Visibility Audit (GEO and AEO) |
|---|---|---|
| Primary goal | Improve rankings and traffic | Improve citations and answer selection |
| Inputs measured | Keywords, pages, links, crawl | Prompts, entities, schema, citations, trust |
| Success metrics | Rank, CTR, sessions | Mentions, cited URLs, accuracy, coverage gaps |
| Optimization focus | SERP positions | Model retrieval and citation readiness |
| Client question answered | Did we rank? | Did we become the answer? |

Why AI Search Visibility Matters in 2026

AI search visibility matters because buyers are outsourcing evaluation to assistants that summarize, compare, and recommend vendors in one step. For businesses, the risk is that a competitor becomes the default recommendation inside answers, even when your brand ranks well for classic queries.

The fastest way to make this tangible is to define a baseline AI visibility audit score, then map each fix to the exact prompts where the brand currently disappears.

When you present improvements as citation lift and answer coverage, the audit stops being subjective and becomes operational.

Step 1: Set the Audit Scope (Platforms, Audience, Goals)

The audit scope matters because you cannot fix what you cannot measure across the right engines and buyer intents.

Set the scope with three decisions.

- Select the AI platforms relevant to this client’s market. A B2B SaaS client may prioritize ChatGPT and Perplexity prompts, while a multi-location consumer brand may prioritize Google AI Overviews.

- Map prompt intent to the ideal customer profile and the buying stage, because informational prompts and vendor selection prompts require different content structures.

- Define what winning means for this client, such as accurate positioning, brand-mention coverage, or citations for high-intent prompts.

Deliverable: Create a one-page audit scope sheet that lists target engines, target personas, and the goal definition for reporting.

Step 2: Build Your Money Prompt Set (10-30 Prompts)

A money prompt set matters because prompts replace keywords as the unit of measurement for AI discovery. Build ten to thirty prompts that mirror real buyer questions and decisions, then use the same prompt set as your audit baseline and for ongoing reporting. Use three prompt categories so you capture awareness, evaluation, and conversion intent in one system.

Category prompts for agency services

Problem-solution prompts for buyer pain

Write prompts like "How do retail brands reduce checkout abandonment without redesigning everything?" so you can test whether the client’s expertise gets pulled into the explanation. These prompts often cite guides, research summaries, and pages with clear definitions.

Comparison prompts for shortlist decisions

Write prompts like "Brand A vs Brand B" and "Alternatives to Brand B" so you can see where the model positions the category and which comparison pages it trusts. This is where agencies can sell AI-driven visibility services in GEO/AEO as a recurring program, because the prompts keep changing with the competitive landscape.

Deliverable: Create a prompt tracker sheet with columns for engine, prompt, response summary, brand mention, cited URLs, and competitors shown.
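If the tracker lives in a spreadsheet, brand-mention coverage per engine can be computed straight from a CSV export. The sketch below assumes column names that mirror the deliverable above and uses made-up sample rows; it illustrates the calculation rather than prescribing a file format.

```python
import csv
import io
from collections import defaultdict

# Hypothetical CSV export of the prompt tracker; columns mirror the deliverable above.
TRACKER_CSV = """engine,prompt,response_summary,brand_mention,cited_urls,competitors_shown
ChatGPT,Best CRM for small agencies,Lists three vendors,yes,https://client.example/crm-guide,BrandB;BrandC
ChatGPT,BrandA vs BrandB,Compares pricing,no,https://brandb.example/compare,BrandB
Perplexity,Best CRM for small agencies,Cites two reviews,yes,https://client.example/crm-guide;https://review.example/crm,BrandC
"""


def mention_coverage(csv_text: str) -> dict[str, float]:
    """Return, per engine, the share of tracked prompts whose answer mentions the brand."""
    totals: dict[str, int] = defaultdict(int)
    mentions: dict[str, int] = defaultdict(int)
    for row in csv.DictReader(io.StringIO(csv_text)):
        engine = row["engine"].strip()
        totals[engine] += 1
        if row["brand_mention"].strip().lower() == "yes":
            mentions[engine] += 1
    return {engine: mentions[engine] / totals[engine] for engine in totals}


if __name__ == "__main__":
    for engine, share in mention_coverage(TRACKER_CSV).items():
        print(f"{engine}: {share:.0%} of tracked prompts mention the brand")
```

Running the same calculation on every tracking cycle gives you the coverage trend by engine; filtering rows by prompt category first gives you coverage by cluster.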

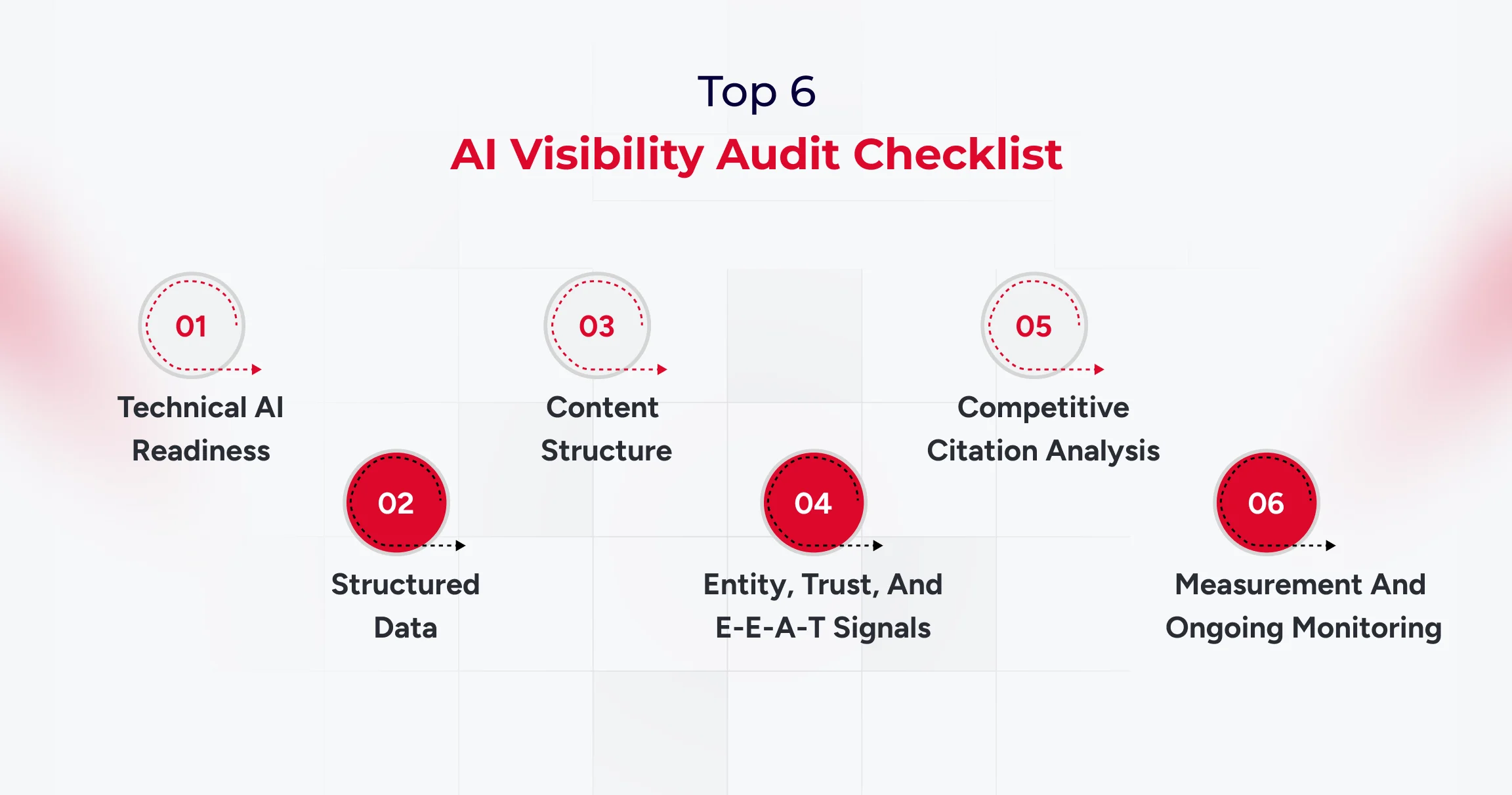

The AI Visibility Audit Checklist: Six Core Categories

A six-category checklist matters because clients approve audits when you show clear weights, concrete checks, and failure definitions. Use a scored checklist that totals 100 points, and force every finding into a measurable category with a clear remediation path.

1) Technical AI Readiness (20 points)

- Confirm that key AI user agents are not blocked in robots.txt, including GPTBot, ClaudeBot, and PerplexityBot.

- Verify crawl eligibility, indexability, and snippet extractability on priority pages.

- Check performance, rendering, and stability on critical templates, especially on mobile.

- Confirm HTTPS, canonical consistency, and sitemap freshness for the pages you expect to be cited.

Failure looks like blocked bots, inconsistent canonicalization, and pages that render poorly for crawlers.
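A quick first-pass check of the robots.txt item can use Python's standard `urllib.robotparser` module. This is a minimal sketch: the sample robots.txt and URL are hypothetical, and the standard-library parser applies rules in file order, which may not match how each crawler resolves conflicting Allow and Disallow lines, so confirm any flagged agent manually.

```python
from urllib.robotparser import RobotFileParser

# AI crawler user agents named in the checklist item above.
AI_USER_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot"]

# Hypothetical robots.txt that blocks GPTBot but allows every other agent.
SAMPLE_ROBOTS = """User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""


def blocked_ai_agents(robots_txt: str, url: str) -> list[str]:
    """Return the AI user agents that this robots.txt disallows for the given URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_USER_AGENTS if not parser.can_fetch(agent, url)]


if __name__ == "__main__":
    print(blocked_ai_agents(SAMPLE_ROBOTS, "https://client.example/pricing"))
```

To check a live site instead of a pasted file, call `set_url()` with the client's robots.txt URL followed by `read()` in place of `parse()`.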

2) Structured Data and Machine Understanding (20 points)

- Validate JSON-LD schema for Organization, Article, FAQ, and Product where relevant.

- Confirm that the schema matches the visible content, with no mismatches that could raise trust issues.

- Check for an llms.txt file if the client uses one, and ensure it aligns with content priorities.

- Verify Knowledge Graph entity claims, including consistent brand descriptors across properties.
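To spot-check that schema matches visible content, a small script can pull the JSON-LD blocks out of a page and confirm that each declared Organization name actually appears in the text readers see. This sketch uses only the Python standard library, handles single objects and top-level arrays but not `@graph` nesting, and the sample HTML and brand names are hypothetical. It does not replace a full schema validator.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects JSON-LD blocks and visible text from an HTML page."""

    def __init__(self) -> None:
        super().__init__()
        self.json_ld: list = []
        self.visible_text: list[str] = []
        self._in_json_ld = False
        self._json_chunks: list[str] = []
        self._skip_depth = 0  # inside a non-JSON-LD <script> or a <style>

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_json_ld = True
        elif tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag == "script" and self._in_json_ld:
            # Script content may arrive in several chunks, so join before parsing.
            self.json_ld.append(json.loads("".join(self._json_chunks)))
            self._json_chunks = []
            self._in_json_ld = False
        elif tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._in_json_ld:
            self._json_chunks.append(data)
        elif not self._skip_depth and data.strip():
            self.visible_text.append(data.strip())


def organization_name_mismatches(html: str) -> list[str]:
    """Return Organization names declared in JSON-LD that never appear in the visible text."""
    extractor = JsonLdExtractor()
    extractor.feed(html)
    extractor.close()
    page_text = " ".join(extractor.visible_text).lower()
    blocks = []
    for item in extractor.json_ld:
        # A JSON-LD script may hold a single object or an array of objects.
        blocks.extend(item if isinstance(item, list) else [item])
    return [
        block["name"]
        for block in blocks
        if block.get("@type") == "Organization"
        and block.get("name")
        and block["name"].lower() not in page_text
    ]


# Hypothetical page: the second Organization name is never shown to readers.
SAMPLE_HTML = """<html><head>
<script type="application/ld+json">{"@context": "https://schema.org", "@type": "Organization", "name": "Acme Analytics"}</script>
<script type="application/ld+json">{"@context": "https://schema.org", "@type": "Organization", "name": "Acme Labs Inc"}</script>
</head><body><h1>Acme Analytics</h1><p>Marketing attribution for retail teams.</p></body></html>"""

if __name__ == "__main__":
    print(organization_name_mismatches(SAMPLE_HTML))
```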

3) Content Structure and Citation Readiness (30 points)

- Ensure the answer appears in the first two to three sentences after each heading.

- Write clean definitions that are copy-friendly and context-complete.

- Add lists and tables where the prompt expects them.

- Support claims with proof such as data, case studies, and measurable outcomes.

- Use question-based H2 structures when the prompt set indicates direct questions.

Failure looks like answers buried deep in the section, vague claims, and pages that read like marketing copy rather than evidence.
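Answer-first structure can be reviewed at scale by extracting only the opening sentences under each heading, so an editor reads those instead of whole pages. The sketch below assumes Markdown H2/H3 headings, splits sentences with a naive punctuation regex, and uses a hypothetical 60-word budget to flag sections whose opening is missing or runs long. It is a triage aid, not a judgment of answer quality.

```python
import re

HEADING = re.compile(r"^#{2,3}\s+(.*)$")  # assumes Markdown H2/H3 headings
SENTENCE_END = re.compile(r"(?<=[.!?])\s+")  # naive split after . ! or ?


def section_leads(markdown: str, max_sentences: int = 3) -> dict[str, str]:
    """Map each H2/H3 heading to the first few sentences of the text under it."""
    sections: list[tuple[str, list[str]]] = []
    for line in markdown.splitlines():
        match = HEADING.match(line)
        if match:
            sections.append((match.group(1).strip(), []))
        elif sections and line.strip():
            sections[-1][1].append(line.strip())
    leads = {}
    for heading, lines in sections:
        sentences = SENTENCE_END.split(" ".join(lines))
        leads[heading] = " ".join(sentences[:max_sentences]).strip()
    return leads


def flag_slow_answers(markdown: str, max_words: int = 60) -> list[str]:
    """Headings whose opening sentences are missing or exceed a (hypothetical) word budget."""
    return [
        heading
        for heading, lead in section_leads(markdown).items()
        if not lead or len(lead.split()) > max_words
    ]


# Hypothetical page excerpt: the second section has no answer under its heading.
SAMPLE_PAGE = """## What is checkout abandonment?
Checkout abandonment is when a shopper starts checkout but leaves before paying.
It is usually reported as a rate. Teams track it weekly. Extra detail follows.

## Why does it matter?
"""

if __name__ == "__main__":
    print(flag_slow_answers(SAMPLE_PAGE))
```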

4) Entity, Trust, and E-E-A-T Signals (20 points)

- Add author bylines with credentials when expertise matters.

- Maintain consistent brand descriptions across the website, profiles, and listings.

- Identify third-party mentions, citations, and references from authoritative sources.

- Evaluate the entity's presence on sources like Wikipedia and Wikidata when relevant to the brand.

- Document methodology on key pages so readers and reviewers can verify claims.

Failure looks like anonymous content, inconsistent brand bios, and no third-party validation.
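Brand description consistency can be triaged by comparing the bios from each property pairwise. This sketch uses `difflib.SequenceMatcher` from the Python standard library as a rough character-level similarity measure; the 0.8 threshold and the sample bios are assumptions, and a low score only means a human should compare the texts.

```python
from difflib import SequenceMatcher
from itertools import combinations


def inconsistent_descriptions(
    descriptions: dict[str, str], threshold: float = 0.8
) -> list[tuple[str, str, float]]:
    """Return property pairs whose brand descriptions fall below a similarity threshold."""
    flagged = []
    for (name_a, text_a), (name_b, text_b) in combinations(descriptions.items(), 2):
        # Character-level similarity ratio between 0.0 and 1.0.
        ratio = SequenceMatcher(None, text_a.lower(), text_b.lower()).ratio()
        if ratio < threshold:
            flagged.append((name_a, name_b, round(ratio, 2)))
    return flagged


# Hypothetical brand bios collected from three properties.
BIOS = {
    "website": "Acme Analytics is a marketing attribution platform for retail brands.",
    "linkedin": "Acme Analytics is a marketing attribution platform for retail brands.",
    "directory": "Acme is a web design studio based in Leeds.",
}

if __name__ == "__main__":
    for pair in inconsistent_descriptions(BIOS):
        print(pair)
```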

5) Competitive Citation Analysis (5 points)

- Identify which competitors win citations for the target prompt set.

- Capture which competitor URLs are being cited and why they fit the prompt.

- Map content gaps where the client is missing pages that match the prompt intent.

Failure looks like competitor pages being cited consistently for prompts where the client has no equivalent asset.
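Competitive citation analysis starts with counting which domains the engines cite across the prompt set. The sketch below assumes you already have the cited URLs for each prompt (for example, from the cited URLs column of the prompt tracker) and reports each domain's share of all citations; the domains are placeholders.

```python
from collections import Counter
from urllib.parse import urlparse


def citation_share(cited_urls_per_prompt: list[list[str]]) -> dict[str, float]:
    """Share of all citations captured by each domain across the tracked prompt set."""
    domains = Counter(
        urlparse(url).netloc.removeprefix("www.")  # treat www and bare domain as one
        for urls in cited_urls_per_prompt
        for url in urls
    )
    total = sum(domains.values())
    # most_common() orders domains from most to least cited.
    return {domain: count / total for domain, count in domains.most_common()}


# Hypothetical cited URLs from three tracked prompts.
CITED = [
    ["https://www.competitor.example/guide", "https://client.example/blog"],
    ["https://competitor.example/compare"],
    ["https://review.example/top-10", "https://competitor.example/pricing"],
]

if __name__ == "__main__":
    for domain, share in citation_share(CITED).items():
        print(f"{domain}: {share:.0%}")
```

A domain that dominates the share for a prompt cluster is the first place to look for the content gap the client needs to close.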

6) Measurement and Ongoing Monitoring (5 points)

- Maintain a prompt tracking system with weekly or biweekly checks.

- Track citation frequency and brand mention coverage by engine and prompt cluster.

- Create a before-and-after report format that clients can sign off on.
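A before-and-after report reduces to comparing coverage per prompt cluster between two tracking runs. This minimal sketch assumes coverage is stored as a 0 to 1 share per cluster (the cluster names and numbers are hypothetical) and reports the change in percentage points, treating a cluster missing from one run as zero coverage.

```python
def coverage_deltas(before: dict[str, float], after: dict[str, float]) -> dict[str, float]:
    """Change in brand-mention coverage per prompt cluster, in percentage points."""
    clusters = sorted(set(before) | set(after))
    # A cluster missing from one run is treated as 0% coverage in that run.
    return {c: round((after.get(c, 0.0) - before.get(c, 0.0)) * 100, 1) for c in clusters}


# Hypothetical coverage shares (0 to 1) from the baseline run and a later run.
BASELINE = {"comparison": 0.2, "problem-solution": 0.5}
LATEST = {"category": 0.25, "comparison": 0.4, "problem-solution": 0.5}

if __name__ == "__main__":
    for cluster, delta in coverage_deltas(BASELINE, LATEST).items():
        print(f"{cluster}: {delta:+.1f} pts")
```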

Scoring note: A score below 60 indicates a high risk of AI invisibility and should be addressed as a priority.

AI Visibility Checking Tools Agencies Should Use in 2026

AI visibility checking tools matter because agencies need proof, baselines, and ongoing monitoring beyond traditional rank tracking.

You need four tool categories, and you should select tools based on the audit category they support, not based on brand popularity.

AI visibility checking tools for technical and crawl readiness

AI visibility checking tools for structured data validation

Use Google’s Rich Results Test and the Schema Markup Validator to confirm schema accuracy and coverage. Report schema findings as pass/fail checks with URLs, so the remediation list becomes straightforward for engineering teams.

AI visibility checking tools for citation monitoring and AI tracking

Use an AI visibility tracker when you need prompt-level history, citation URLs, and sentiment trends across engines. Platforms like Profound and Wellows are positioned around multi-engine citation tracking and workflow reporting, while suites like SE Ranking are building bridges between classic SEO signals and AI visibility monitoring.

AI visibility checking tools for reporting and workflow proof

Use prompt tracking spreadsheets, annotated screenshots, and versioned reporting templates so the client can see progress by prompt cluster.

This is also how you turn AI visibility work into a monthly program, because the system becomes your audit-and-reporting engine.

How to Package AI-Driven Visibility Services in GEO/AEO for Clients

Packaging matters because the AI visibility audit becomes more valuable when it leads directly to an execution motion. You can offer three service models, each with a clear scope, deliverables, and reporting cadence.

Model 1: One-time AI Visibility Audit Report

The scope focuses on baseline scoring, findings, and a Top 10 GEO fixes list.

Model 2: Monthly GEO retainer

Model 3: Full GEO as a service

Scope includes audit, content restructuring, technical fixes, schema improvements, entity building, and reporting.

Timeline expectations should be conservative and measurable, with early movement often visible within four to eight weeks and more stable citation gains typically visible within eight to sixteen weeks.

Scoring Your Client's AI Visibility (0-100 Agency Rubric)

Use the 0 to 100 score as a weighted summary of the six audit categories, and make sure every finding can be traced to an explicit score impact.

Use three score bands so the client can quickly understand severity:

- Below 60: high risk and major visibility gaps.

- 60 to 79: moderate risk with clear optimization opportunities.

- 80 or above: strong readiness, with effort shifting to competitive citations and prompt coverage expansion.
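The weighted rubric fits in a few lines of code. This sketch uses the six category weights from the checklist and assumes each category earns partial credit in proportion to the checks it passes; that partial-credit rule, the category keys, and the sample results are assumptions rather than part of the rubric itself.

```python
# Category weights from the six-category checklist (they sum to 100).
WEIGHTS = {
    "technical_readiness": 20,
    "structured_data": 20,
    "content_structure": 30,
    "entity_trust": 20,
    "competitive_citations": 5,
    "measurement": 5,
}


def audit_score(results: dict[str, tuple[int, int]]) -> float:
    """Weighted 0-100 score from (checks_passed, checks_total) per category.

    Assumes partial credit proportional to the share of checks passed;
    a category with no recorded checks contributes nothing.
    """
    score = 0.0
    for category, weight in WEIGHTS.items():
        passed, total = results.get(category, (0, 0))
        if total:
            score += weight * passed / total
    return round(score, 1)


def score_band(score: float) -> str:
    """Map a score to the three severity bands of the rubric."""
    if score < 60:
        return "High risk: major visibility gaps"
    if score < 80:
        return "Moderate risk: clear optimization opportunities"
    return "Strong readiness: expand competitive citations and prompt coverage"


# Hypothetical audit results as (checks passed, checks total).
RESULTS = {
    "technical_readiness": (4, 4),
    "structured_data": (2, 4),
    "content_structure": (3, 5),
    "entity_trust": (2, 5),
    "competitive_citations": (1, 3),
    "measurement": (0, 3),
}

if __name__ == "__main__":
    total = audit_score(RESULTS)
    print(total, score_band(total))
```

Keeping the weights in one place makes it easy to show the client exactly which failed checks cost which points.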

From Audit to Action: The 30/60/90-Day GEO Roadmap

A 30/60/90 roadmap matters because clients want a plan that converts audit findings into a calendar they can approve.

Start by building a priority matrix based on impact and effort, then map each fix to a time-bound sprint.

30/60/90 structure

- Days 0 to 30: complete technical fixes, finalize baseline prompt tracking, and deliver the scored report.

- Days 31 to 60: restructure content into an answer-first format, improve definitions, add tables and comparisons, and align entity language.

- Days 61 to 90: monitor citations, run a competitive gap analysis, and deliver a second measurement compared against the baseline, with deltas.

Deliverable: Create a Top 10 GEO fixes list with issue, impact, effort, owner, and deadline.
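The Top 10 list can be generated from the full findings log. This sketch assumes hypothetical 1 to 5 scales for impact and effort, ranks fixes by a simple impact-to-effort ratio, and sets each deadline from the roadmap window (30, 60, or 90 days) the fix belongs to; teams that weight impact more heavily may prefer a different formula.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Fix:
    issue: str
    impact: int      # assumed scale: 1 (low) to 5 (high)
    effort: int      # assumed scale: 1 (low) to 5 (high)
    owner: str
    sprint_day: int  # roadmap window the fix belongs to: 30, 60, or 90

    @property
    def priority(self) -> float:
        # Simple impact-to-effort ratio; higher means do it sooner.
        return self.impact / self.effort


def top_fixes(fixes: list[Fix], start: date, limit: int = 10) -> list[dict]:
    """Rank fixes by impact/effort and attach a deadline from the roadmap window."""
    ranked = sorted(fixes, key=lambda f: f.priority, reverse=True)[:limit]
    return [
        {
            "issue": f.issue,
            "impact": f.impact,
            "effort": f.effort,
            "owner": f.owner,
            "deadline": start + timedelta(days=f.sprint_day),
        }
        for f in ranked
    ]


# Hypothetical findings from an audit.
FIXES = [
    Fix("Unblock GPTBot in robots.txt", impact=5, effort=1, owner="Dev", sprint_day=30),
    Fix("Rewrite pricing page answer-first", impact=4, effort=3, owner="Content", sprint_day=60),
    Fix("Add Organization JSON-LD", impact=3, effort=1, owner="Dev", sprint_day=30),
]

if __name__ == "__main__":
    for row in top_fixes(FIXES, date(2026, 1, 5)):
        print(row)
```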

Conclusion

An AI visibility audit is now a core agency deliverable because AI answers shape discovery at a massive scale. When Google AI Overviews alone reach about two billion monthly users, agencies cannot treat citations and answer selection as optional.

Use this AI visibility audit checklist to score readiness, prioritize fixes, and move from baseline to measurable citation gains with a 30/60/90 roadmap. If you standardize prompts, scoring, and reporting, you can turn AI visibility audit work into a repeatable service line with clear proof.

If you want to improve AI search visibility without building the audit template yourself, connect with our experts.

Frequently Asked Questions

#1. What is an AI visibility audit?

#2. How does an AI visibility audit differ from an SEO audit?

#3. Why is a money prompt set required for AI visibility work?

#4. Can agencies use AI visibility checking tools to track progress monthly?

#5. Which AI platforms should agencies prioritize in 2026?

#6. How do you measure AI search visibility?

AI search visibility is measured using prompt tracking, citation frequency, brand mentions, and retrieval accuracy across AI engines.